Automai Testing – FSLogix vs. Citrix Profile Management

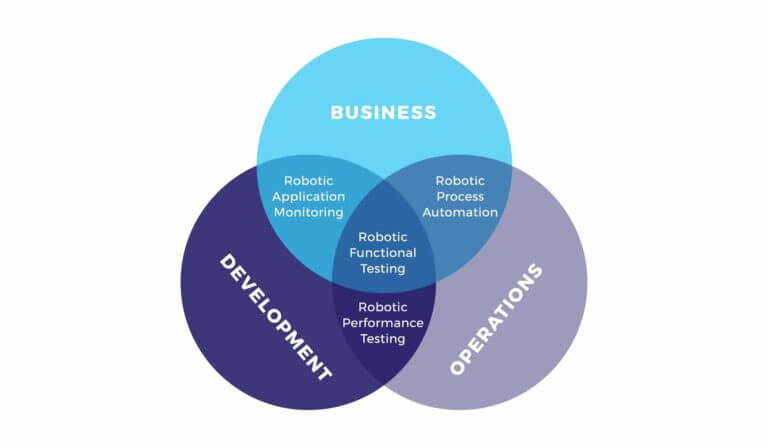

Automai provides tools that help administrators and architects of end-user computing (EUC) environments assess existing deployments, plan and roll out new ones, and continuously monitor and improve them once deployed. As part of its commitment to the EUC community, Automai regularly tests EUC solutions. Many profile management solutions are available to the EUC sector; the two